Introduction

This article is part of a presentation for The Associated Press 2013 Technology Summit.

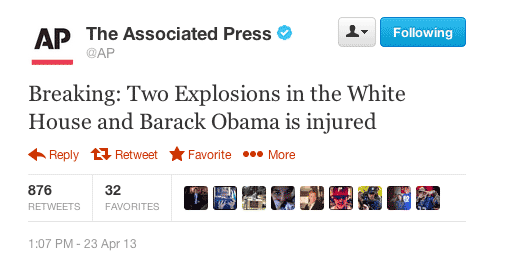

On April 23, 2013, the stock market experienced one of its biggest flash-crash drops of the year, with the Dow Jones Industrial Average falling 143 points (over 1%) in a matter of minutes. Unlike the 2012 stock market blip, this one wasn’t caused by an individual trade, but by a single tweet from the Associated Press (AP) account on the social network Twitter. The tweet, of course, wasn’t written by AP, but by an imposter who had temporarily gained control of the account. Considering the impact of real-time messaging services such as Twitter, is it possible to detect that a tweet like this was hacked?

In this article, we’ll discuss how to use machine learning and so-called “big data” analysis to mine large amounts of information and extract meaningful relationships from it. In particular, we’ll walk through a prototype machine learning example that attempts to classify tweets as having been authored by AP or not. We’ll examine learning curves to see how they help validate machine learning algorithms and models. As a final test, we’ll run the program on the hacked tweet and see whether it can correctly classify the tweet as authentic or hacked.

The Suspect

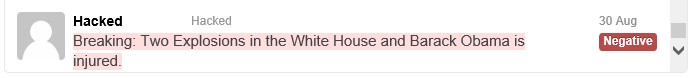

1:07 PM - 23 Apr 13 Breaking: Two Explosions in the White House and Barack Obama is injured.

Can It Be Done?

Detecting whether a tweet is authentic or hacked is really a question of authorship. Before building a model, the first question to ask is: does the hacked tweet contain enough data to indicate its authenticity?

A human looking at the original tweet, shown above, might easily assume it is legitimate. The tweet appears to use language that is common throughout AP’s tweet history. It’s typical for a headline to begin with the phrase “Breaking:”, followed by a description.

However, on closer inspection, those familiar with AP’s language and terminology may be able to spot anomalies within the tweet. The first issue is the casing of the term “Breaking”. Traditionally, AP uses the capitalized version “BREAKING” to announce timely news. (Our machine learning prototype actually ignores case, so it will need other artifacts from the tweet to indicate authorship.)

In addition to the other casing anomalies within the text, there is also an unusual combination of the phrases “Two Explosions in the White House” + “and” + “Barack Obama is injured”. Specifically, the subject phrases and the usage of the term “and” seem out of place.

So it seems possible to determine that the target tweet may not be from the original author. Putting aside human analysis, let’s give the computer a try. We’ll attempt to use a machine learning algorithm to see if it can correctly classify AP’s tweets.

Why, Hello There, Twitter

The most important part of a machine learning solution is the amount and quality of the data it learns from. To classify AP’s tweets by authorship, we’ll need to extract tweets from AP’s Twitter account history to serve as the positive cases. We’ll also need a collection of non-AP tweets to serve as the negative cases.

To aid in the collection of tweets, the C# .NET library TweetSharp was used. Queries were initially prepared to extract AP tweets, using the search term “from:AP”, and later refined to include date ranges.

Extracting Tweets

The following is an example of C# .NET code, using TweetSharp, to extract recent AP tweets. The first step is to automatically log in to the Twitter service:

    // Step 1 - Retrieve an OAuth Request Token

Once logged in, tweet extraction can begin, as follows:

    public static IEnumerable<TwitterStatus> Search(string keyword, int count,

The above code attempts to retrieve a set number of tweets. We ignore retweets (indicated by a tweet starting with “RT”). We also cleanse tweets to remove newlines and tabs, and we discard duplicates.
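The cleansing rules described above can be sketched independently of TweetSharp. The following is a minimal illustration that works on plain strings; the class and method names (`TweetCleaner`, `Normalize`, `IsRetweet`, `Clean`) are hypothetical and are not taken from the original program.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper: names are illustrative, not from the original program.
public static class TweetCleaner
{
    // Collapse newlines, tabs, and runs of spaces into single spaces.
    public static string Normalize(string text)
    {
        return Regex.Replace(text ?? string.Empty, @"\s+", " ").Trim();
    }

    // Mirror the simple rule from the article: a tweet starting with "RT" is a retweet.
    // This is deliberately naive and would also drop a tweet that begins with, say, "RTE".
    public static bool IsRetweet(string text)
    {
        return text.StartsWith("RT", StringComparison.Ordinal);
    }

    // Return cleansed tweets in their original order, without retweets or duplicates.
    public static List<string> Clean(IEnumerable<string> rawTweets)
    {
        var seen = new HashSet<string>(StringComparer.OrdinalIgnoreCase);
        var result = new List<string>();

        foreach (string raw in rawTweets)
        {
            string text = Normalize(raw);
            if (text.Length == 0 || IsRetweet(text))
            {
                continue;
            }

            // HashSet.Add returns false when the same text was already kept.
            if (seen.Add(text))
            {
                result.Add(text);
            }
        }

        return result;
    }
}
```

Duplicates are compared after normalization and without regard to case, so two copies of a tweet that differ only in whitespace count as one.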

Since TweetSharp has a limit of 200 results per query, we need to keep looping until the requested count has been filled. Note that Twitter also applies rate limiting, which is why the code checks the resulting status code to see whether we should pause querying for a while.
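The loop-until-filled pattern with a rate-limit pause can be sketched without depending on any particular Twitter client. In this sketch the actual request is passed in as a delegate; `SearchPage`, `TweetPager`, and the `maxPauses` safety cap are assumptions made for illustration and are not TweetSharp types or part of the original code.

```csharp
using System;
using System.Collections.Generic;
using System.Threading;

// Hypothetical result of one search request; this is not a TweetSharp type.
public sealed class SearchPage
{
    public List<string> Tweets = new List<string>();
    public bool RateLimited;   // true when the service asked us to back off
    public long? NextMaxId;    // cursor for the next (older) page, null when exhausted
}

public static class TweetPager
{
    // Keep requesting pages until 'count' tweets are collected, the history runs out,
    // or we have paused for rate limiting more than 'maxPauses' times.
    public static List<string> Collect(
        Func<long?, SearchPage> fetchPage, int count, TimeSpan pause, int maxPauses)
    {
        var results = new List<string>();
        long? maxId = null;
        int pauses = 0;

        while (results.Count < count)
        {
            SearchPage page = fetchPage(maxId);

            if (page.RateLimited)
            {
                // Give up after too many pauses instead of looping forever.
                if (++pauses > maxPauses) break;
                Thread.Sleep(pause);
                continue;
            }

            foreach (string tweet in page.Tweets)
            {
                if (results.Count == count) break;
                results.Add(tweet);
            }

            // Stop when the service has nothing older to return.
            if (page.Tweets.Count == 0 || page.NextMaxId == null) break;
            maxId = page.NextMaxId;
        }

        return results;
    }
}
```

Passing the request in as a delegate keeps the paging logic testable with a fake data source, and the cap on pauses prevents an endless loop if the rate limit never clears.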

The results from TweetSharp are then saved to a CSV format file, using the C# .NET library CsvHelper.

While TweetSharp worked quite well for extracting a limited history of tweets, the API apparently limits how far back in time tweets can be retrieved. This would leave us with about 1,100 data examples to train on. Ideally, we would use a lot more data. Note that initial training on this minimal data set actually achieved 94% accuracy, although the learning curves indicated that higher accuracy could be achieved with more data.

It’s Not Who Has The Best Algorithm That Wins

As the old machine learning adage goes: “it’s not who has the best algorithm that wins; it’s who has the most data”. Therefore, more data was obtained through various data sources, allowing a more complete history of AP’s tweet content. Keywords used for extracting data followed the format “from:AP since:2012-01-01 until:2012-12-31”, etc.

Keywords used for extracting non-AP data included the format: “-from:AP”. Additional targeted non-AP data was extracted, including 100 tweets from “-from:AP obama”, “-from:AP breaking”, and “-from:AP explosions”. Since our target tweet shares these topics, this allows the algorithm to have knowledge about the domain.

Digitizing Tweets

To allow the machine learning algorithm to process the tweets, each tweet needs to be converted into a numerical format. There are a couple of different methods for doing this, such as TF*IDF, but the method that worked best here appeared to be word indexing.

First, the collection of tweets was separated into two portions: the training set, and the cross validation (CV) set. The training set would be used for all learning-based examples, while the CV set would be used for calculating accuracy scores.
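As a rough sketch of this step, the split can be done by shuffling the collection and cutting it at a chosen fraction. The article does not state the ratio or the shuffling method that was used, so the `trainFraction` and `seed` parameters and the `DataSplitter` name below are assumptions for illustration only.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper: the split ratio and shuffling are assumptions, not from the article.
public static class DataSplitter
{
    // Shuffle a copy of the items (Fisher-Yates) and cut it into training and CV parts.
    public static void Split<T>(
        IList<T> items, double trainFraction, int seed,
        out List<T> training, out List<T> crossValidation)
    {
        if (trainFraction < 0.0 || trainFraction > 1.0)
        {
            throw new ArgumentOutOfRangeException("trainFraction");
        }

        var shuffled = new List<T>(items);
        var random = new Random(seed);

        for (int i = shuffled.Count - 1; i > 0; i--)
        {
            int j = random.Next(i + 1);   // 0 <= j <= i
            T tmp = shuffled[i];
            shuffled[i] = shuffled[j];
            shuffled[j] = tmp;
        }

        int trainCount = (int)Math.Round(shuffled.Count * trainFraction);
        training = shuffled.GetRange(0, trainCount);
        crossValidation = shuffled.GetRange(trainCount, shuffled.Count - trainCount);
    }
}
```

Shuffling before the cut matters when the tweets were collected in date order or grouped by author; otherwise the CV set could end up containing only one kind of tweet.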

A vocabulary was built from the training set by tokenizing the text of the tweets and then using the Porter stemmer algorithm (Centivus.EnglishStemmer.dll) to obtain the collection of distinct base words.
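The vocabulary step might look roughly like the following. To keep the sketch self-contained, the stemmer is passed in as a function rather than calling the Centivus library directly, and the tokenizer is a simple lowercase alphanumeric split; both choices, along with the `VocabularyBuilder` name, are assumptions rather than the original implementation.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper: the tokenizer and the injected stemmer are illustrative assumptions.
public static class VocabularyBuilder
{
    // Lowercase the text and split it into alphanumeric tokens.
    public static IEnumerable<string> Tokenize(string text)
    {
        foreach (Match match in Regex.Matches(text.ToLowerInvariant(), @"[a-z0-9]+"))
        {
            yield return match.Value;
        }
    }

    // Collect the distinct stemmed tokens of all tweets, in a stable sorted order.
    public static List<string> Build(IEnumerable<string> tweets, Func<string, string> stem)
    {
        var vocabulary = new SortedSet<string>(StringComparer.Ordinal);

        foreach (string tweet in tweets)
        {
            foreach (string token in Tokenize(tweet))
            {
                vocabulary.Add(stem(token));
            }
        }

        return new List<string>(vocabulary);
    }
}
```

Sorting the vocabulary is a convenience rather than a requirement: what matters is that every term keeps the same index each time a tweet is digitized.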

We then digitize each tweet in the training set into an array of ints, with one position for each word in the vocabulary. For each tweet, we check each word in the vocabulary and see if it exists in the current tweet. If the vocabulary word exists, we place a 1 at that index in the array. If it does not, we place a 0 at that index. The end result is a vector of size n, where n equals the number of terms in the vocabulary. This ensures that every training set item has the same length n and consists of a series of 0’s and 1’s. For example, if the vocabulary consists of 250 stemmed terms, then each tweet will be converted into an array of 250 integers (giving us a matrix of m data rows, each of length 250).
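A minimal sketch of this digitizing step is shown below. It assumes the same simple tokenizer and injected stemmer function as a stand-in for the real stemming library; the `TweetVectorizer` name is hypothetical.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper: converts one tweet into a 0/1 vector over the vocabulary.
public static class TweetVectorizer
{
    public static int[] Digitize(string tweet, IList<string> vocabulary, Func<string, string> stem)
    {
        // Collect the distinct stemmed terms that occur in this tweet.
        var terms = new HashSet<string>(StringComparer.Ordinal);
        foreach (Match match in Regex.Matches(tweet.ToLowerInvariant(), @"[a-z0-9]+"))
        {
            terms.Add(stem(match.Value));
        }

        // One slot per vocabulary term: 1 if the term occurs in the tweet, 0 otherwise.
        var vector = new int[vocabulary.Count];
        for (int i = 0; i < vocabulary.Count; i++)
        {
            vector[i] = terms.Contains(vocabulary[i]) ? 1 : 0;
        }

        return vector;
    }
}
```

Words that are not in the vocabulary are simply ignored, and a word that appears several times in a tweet still produces a single 1, which is what separates this encoding from a term-frequency one.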

Note that if TF*IDF (term frequency, inverse document frequency) were used, the values in the array would instead be doubles. However, since tweets are limited to 140 characters, it’s more difficult to gain value from term frequency relations within the text, so indexing was used instead.

Proof That We’re Learning Something

Learning curves are an excellent way of telling whether a machine learning algorithm is actually learning. By plotting the accuracy against the number of training set items, it becomes apparent whether the algorithm is learning as the data examples grow, and whether adding more data will actually help or hinder accuracy.
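The data behind such a curve can be produced with a simple loop: train on a growing slice of the training set and record the accuracy on that slice and on the CV set. The sketch below keeps the classifier abstract by accepting a training function that returns a prediction function; the `LearningCurve` and `LearningCurvePoint` names and the `step` parameter are assumptions for illustration, not part of the original program.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Hypothetical container for one point on the learning curve.
public sealed class LearningCurvePoint
{
    public int TrainingSize;
    public double TrainingAccuracy;
    public double CvAccuracy;
}

public static class LearningCurve
{
    // Fraction of examples whose predicted label matches the actual label.
    public static double Accuracy(Func<int[], int> predict, int[][] inputs, int[] labels)
    {
        if (inputs.Length == 0) return 0.0;

        int correct = 0;
        for (int i = 0; i < inputs.Length; i++)
        {
            if (predict(inputs[i]) == labels[i]) correct++;
        }
        return (double)correct / inputs.Length;
    }

    // Train on the first 'size' examples for size = step, 2*step, ... and score each model.
    public static List<LearningCurvePoint> Compute(
        Func<int[][], int[], Func<int[], int>> train,
        int[][] trainX, int[] trainY, int[][] cvX, int[] cvY, int step)
    {
        if (step <= 0) throw new ArgumentOutOfRangeException("step");

        var points = new List<LearningCurvePoint>();
        for (int size = step; size <= trainX.Length; size += step)
        {
            int[][] subsetX = trainX.Take(size).ToArray();
            int[] subsetY = trainY.Take(size).ToArray();
            Func<int[], int> predict = train(subsetX, subsetY);

            points.Add(new LearningCurvePoint
            {
                TrainingSize = size,
                TrainingAccuracy = Accuracy(predict, subsetX, subsetY),
                CvAccuracy = Accuracy(predict, cvX, cvY)
            });
        }
        return points;
    }
}
```

Plotting `TrainingAccuracy` and `CvAccuracy` against `TrainingSize` gives the two lines of the curve. A large, persistent gap between them suggests variance, while two low lines that have converged suggest bias.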

For machine learning algorithms in C# .NET, the Accord .NET library was used.

An initialization of an SVM can be done with the following code:

    MulticlassSupportVectorMachine machine = new

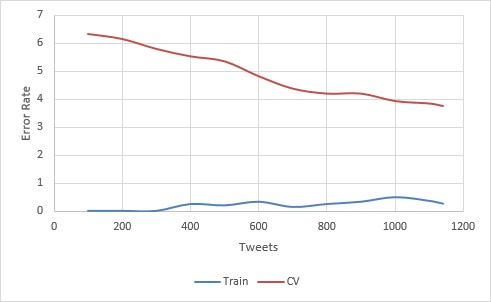

A First (Pretty Good) Attempt

For the first attempt, a support vector machine (SVM) with a Gaussian kernel (sigma 2) was used. It achieved an accuracy of 99.74% Training and 96.22% CV on a training set of 1,140 items.

This is pretty good, especially considering we’re only using 1,140 training set items. The learning curve is also promising. Bias is virtually non-existent, and variance is kept to a minimum. Looking at the slope of the curve, it certainly appears that more data will only improve the accuracy. Still, we can do better.

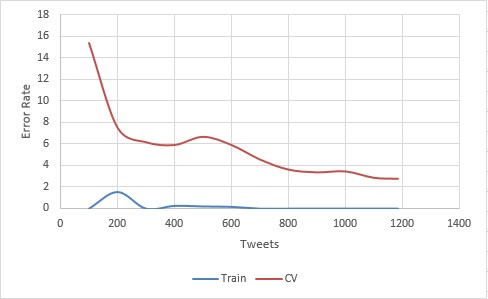

The Second (Even Better) Attempt

For the second attempt, the SVM was changed to use a linear kernel, achieving an accuracy of 100% Training and 97.21% CV.

Now we’re cooking! This bumps our CV accuracy up by about one percentage point, and the slope appears just as sharp, meaning that more data could push the accuracy up even further.
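To make the difference between the two attempts concrete, here is a small sketch of the two kernel functions written out by hand. It assumes the commonly used Gaussian form exp(-||x - y||² / (2σ²)); the `Kernels` class is illustrative and is not the Accord .NET implementation.

```csharp
using System;

// Hypothetical helper showing the two kernel functions; not the Accord .NET classes.
public static class Kernels
{
    // Linear kernel: the plain dot product of the two vectors.
    public static double Linear(double[] x, double[] y)
    {
        if (x.Length != y.Length) throw new ArgumentException("Vectors must have equal length.");

        double sum = 0.0;
        for (int i = 0; i < x.Length; i++)
        {
            sum += x[i] * y[i];
        }
        return sum;
    }

    // Gaussian kernel, assuming the common form exp(-||x - y||^2 / (2 * sigma^2)).
    public static double Gaussian(double[] x, double[] y, double sigma)
    {
        if (x.Length != y.Length) throw new ArgumentException("Vectors must have equal length.");

        double squaredDistance = 0.0;
        for (int i = 0; i < x.Length; i++)
        {
            double d = x[i] - y[i];
            squaredDistance += d * d;
        }
        return Math.Exp(-squaredDistance / (2.0 * sigma * sigma));
    }
}
```

For 0/1 word vectors like ours, the linear kernel simply counts the vocabulary terms two tweets share, while the Gaussian kernel turns the number of differing terms into a similarity between 0 and 1.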

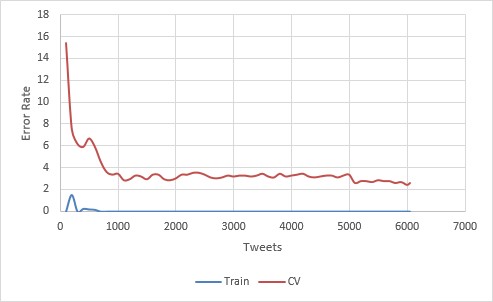

It’s time to feed our C# .NET machine learning algorithm more data and see what it can do. The data was increased to a training set size of 6,054 tweets.

Results?

The best algorithm was trained on 6,054 tweets. Roughly half were authored by AP, and the rest were authored by other users.

The program achieved a final accuracy of 100% Training, 97.38% CV, 96.23% Test. Judging by the learning curve, it looks like there is still some room to go even further, by providing more training examples.

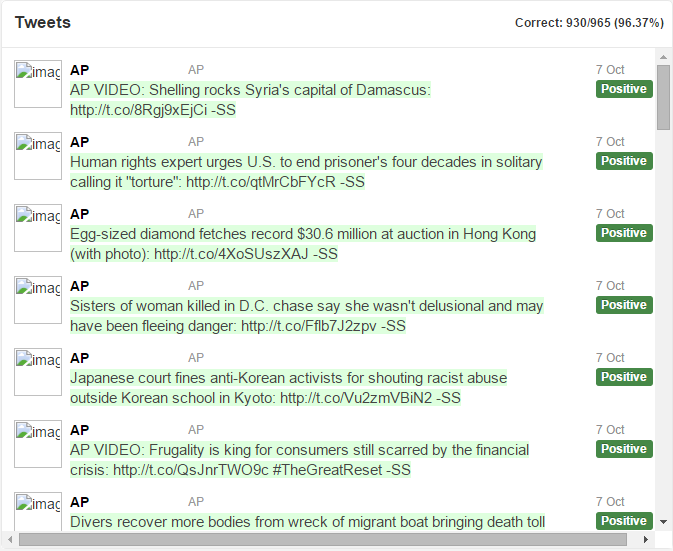

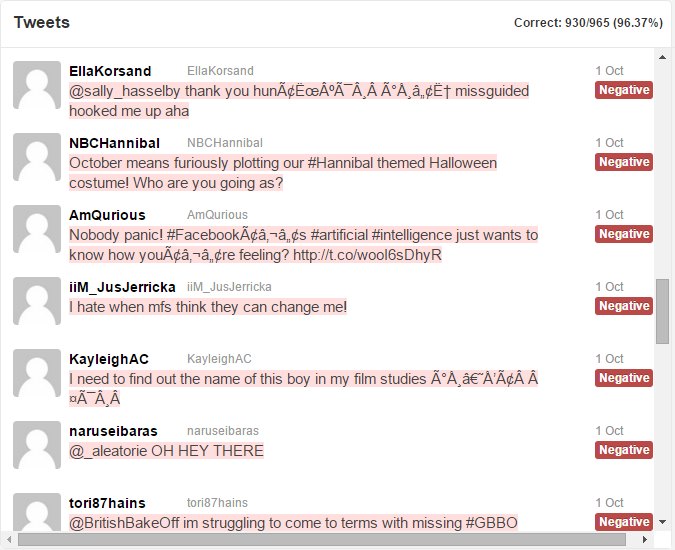

Here is a view of the resulting program running on real live data (test set). The program never saw these tweets before in its whole life. Honest!

You can download the full test results, in all their glory, to view all 965 tweets.

Notice in the test results that the majority of the tweets by AP are correctly marked positive and colored in green. The tweets by other users are correctly marked negative and colored in red. Some errors slip through (just under 4%, in line with the 96.23% test accuracy), but the results by the computer are impressive.
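The accuracy and error figures above come down to comparing each predicted label with the actual author. A minimal sketch of that tally follows, assuming labels of 1 for AP and 0 for everyone else; the `ConfusionCounts` and `Evaluation` names are hypothetical.

```csharp
using System;

// Hypothetical tally of prediction outcomes, assuming 1 = AP and 0 = not AP.
public sealed class ConfusionCounts
{
    public int TruePositives;   // AP tweets marked as AP
    public int TrueNegatives;   // non-AP tweets marked as non-AP
    public int FalsePositives;  // non-AP tweets marked as AP
    public int FalseNegatives;  // AP tweets marked as non-AP

    public int Total
    {
        get { return TruePositives + TrueNegatives + FalsePositives + FalseNegatives; }
    }

    public double Accuracy
    {
        get { return Total == 0 ? 0.0 : (double)(TruePositives + TrueNegatives) / Total; }
    }
}

public static class Evaluation
{
    public static ConfusionCounts Count(int[] predicted, int[] actual)
    {
        if (predicted.Length != actual.Length)
        {
            throw new ArgumentException("Predicted and actual labels must have equal length.");
        }

        var counts = new ConfusionCounts();
        for (int i = 0; i < predicted.Length; i++)
        {
            if (predicted[i] == 1 && actual[i] == 1) counts.TruePositives++;
            else if (predicted[i] == 0 && actual[i] == 0) counts.TrueNegatives++;
            else if (predicted[i] == 1) counts.FalsePositives++;
            else counts.FalseNegatives++;
        }
        return counts;
    }
}
```

Splitting the errors into false positives and false negatives is useful here, because wrongly accepting a hacked tweet as AP is a much more costly mistake than wrongly flagging a genuine one.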

If you scroll to the very bottom of the test set, you’ll come to our suspect tweet, correctly colored in red:

Conclusion

This year has seen some significant advances in big-data analysis, and in particular, machine learning. With the increasingly massive amounts of data being passed through computers every day, it’s becoming more and more difficult for humans to keep pace. Luckily, faster processors and smarter algorithms are allowing us to make sense of it all, and may, in fact, end up taking over much of what we do today.

Machine learning and artificial intelligence are exciting parts of computer science that are growing in importance as more data is collected. This article has provided a short introduction to the power of machine learning and, possibly, a hint of what’s to come in the future.

Interested in more? You can read about the other things I’ve done to help make computers smarter.

About the Author

This article was written by Kory Becker, software developer and architect, skilled in a range of technologies, including web application development, machine learning, artificial intelligence, and data science.
